Manager-Led AI Adoption Starts in the Middle

Most AI strategies break in the same place: the middle of the organisation, where managers sit between a bold slide deck and overwhelmed teams.

Across Gallup, EY and Microsoft’s Work Trend Index, the pattern is remarkably consistent. AI tools are available, employees are curious, executives are enthusiastic, yet only a small slice of organisations see material productivity gains. The missing capability is not another model or pilot app. It is managers who know how to weave AI into real workflows, safely and visibly, and then lead their teams through the change.

This is where manager-led AI adoption becomes the real competitive advantage.

The data problem: AI usage is up, meaningful change is not

Over the last two years, AI access has exploded. Microsoft’s 2024 Work Trend Index reports that roughly three quarters of global knowledge workers now use generative AI in some form, with many of them adopting it informally and bringing their own tools into work. At the same time, executives overwhelmingly say that AI is essential for staying competitive, but a majority admit they lack a clear plan for how to translate AI experiments into measurable business outcomes.

Gallup’s research on AI at work adds an important nuance. When organisations roll out AI, most employees still use it for lightweight tasks such as search and summarisation, rather than redesigning how work flows through a team. A large group of non‑users say the core issue is relevance. They simply do not see how AI can assist with the work they actually do. Others hold back because they worry about legal and privacy risk or because they do not feel they have the skills to use AI credibly in front of colleagues.

EY’s Work Reimagined studies point in the same direction. Organisations have invested heavily in technology but are still leaving a significant portion of productivity gains on the table. The blockers are not just financial or technical. They are organisational, cultural and managerial.

When you put these threads together, a clear picture emerges. AI capability in the tools is running far ahead of AI capability in management.

Why managers are the real AI force multiplier

Gallup’s “Manager Support Drives Employee AI Adoption” work is blunt. Employees who strongly agree that their manager actively supports AI are far more likely to use AI frequently, are dramatically more likely to say it helps them do their best work, and are much more likely to feel that AI creates room for them to use their strengths each day.

The logic is simple in practice:

- Executives can announce a Copilot or automation programme, but employees experience it through their manager.

- Employees infer safety from the person who reviews their work. If a manager looks unsure, hesitant or quietly sceptical, the team slows down.

- If a manager can sit in a planning session and say, “For this month’s reporting, AI will draft the narrative and you will stay firmly on the hook for the numbers and the judgement,” everyone suddenly knows where they stand.

Managers set the local rules of engagement. That is why manager-led AI adoption is so powerful for SMEs and mid‑market organisations, where culture and workflow are heavily shaped by a relatively small layer of middle leadership.

Four hidden barriers managers face with AI

If managers are the lever, why are so many of them struggling to pull it? Across the research and client conversations, four consistent barriers show up.

1. Relevance

“I do not see where this fits my team’s work”

Many managers still perceive AI as a generic assistant. Without concrete examples, such as using n8n to auto-compile status updates or AI to draft variance analysis, they default to treating it as a "nice to have."

2. Safety

“What if my team gets us in trouble?”

Managers worry about breaching GDPR or internal data rules. Without simple guardrails, the safest path is to quietly avoid using AI on anything that matters.

3. Capability

“I am meant to lead this, but I am not an expert”

Training explains buttons, not judgement. Managers need to know what "good enough" looks like and how to stitch AI into workflows without breaking them.

4. Metrics

“I am being measured on the wrong things”

Obsessing over prompt counts leads to "toy" usage. Managers need to be accountable for outcomes like cycle time and error rates, not login frequency.

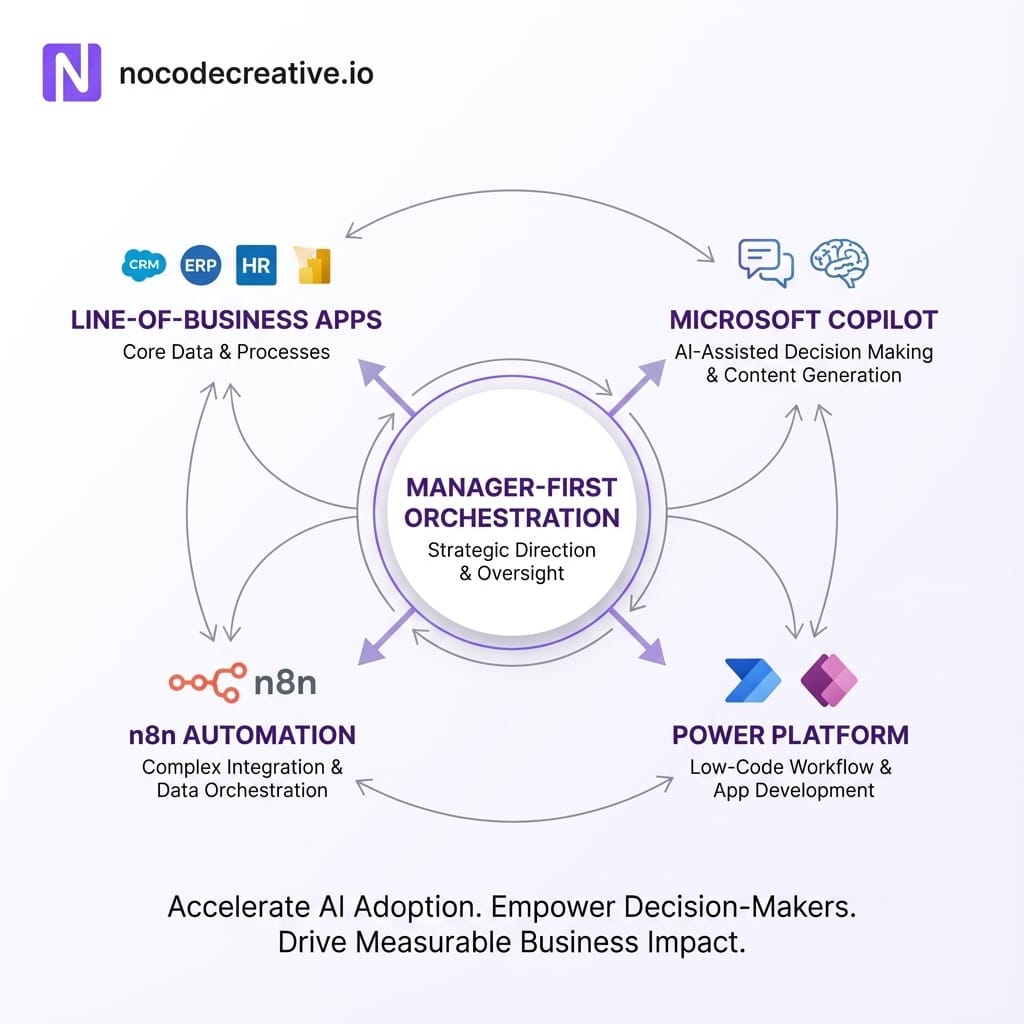

Designing a manager-first AI enablement programme

If you accept that AI success rests on managers, the design of your rollout changes. Instead of training thousands of end users first, you start with a focused cohort of frontline and middle managers and give them a structured path to build competence and confidence.

A practical pattern we see working in SMEs and mid‑market organisations is a 6 to 8 week manager-first AI enablement programme built around three pillars:

Guardrails and judgement

Start with a short, engaging workshop for managers that covers:

- Which types of data are safe to use in your approved AI tools and which are not, in both UK and international contexts.

- How to review AI output, including examples of strong, weak, and risky responses.

- Clear expectations: AI can draft, summarise and suggest, but humans remain accountable.

Tip: Use simple, visual frameworks. For example, a red / amber / green data classification or a one‑page “AI charter” for each team.

Workflow identification and prototyping

In weeks 3 and 4, ask each manager to choose two or three recurring workflows that hurt the most. Good candidates are:

- Status reporting and “update” emails.

- Customer responses that follow familiar patterns.

- Internal documentation, FAQs, playbooks or training material.

- Data analysis that always starts from the same spreadsheet or report.

With light support from IT or an automation partner, managers then prototype new versions of these workflows using Microsoft 365 Copilot, Power Platform, or n8n. The point is to give the manager a tangible, local example, not a generic AI showcase.

Team experiments and outcome tracking

In weeks 5 to 8, managers run small, low‑risk experiments with their teams. They:

- Introduce the AI‑enhanced workflow.

- Agree on what will not change (e.g., approvals remain manual).

- Track concrete metrics such as turnaround time or volume of rework.

Managers then bring these experiments to a monthly “AI playbook” session where they share patterns, pitfalls and templateable flows.

Concrete workflow examples using Copilot, Power Platform and n8n

To make this less abstract, here are cross‑functional examples that reflect what we design for clients.

Operations: Shorter cycles and fewer handoffs

An operations manager in a logistics firm notices that weekly performance updates take half a day of collating spreadsheets, emails and system reports.

- An n8n workflow pulls data from the transport management system, warehouse system and CRM on a schedule, and writes snapshots into a structured data store or SharePoint list.

- Power BI or Excel generates standard views, which Copilot then turns into narrative summaries, highlighting outliers.

- Copilot drafts the manager’s update to stakeholders; the manager applies judgement before sending.

Finance: Accelerating month‑end without losing control

Finance managers are under pressure to close the books faster, particularly under UK and international reporting standards, yet much of the work is repetitive. A manager-led approach typically involves:

- Mapping the month‑end close checklist and tagging steps that are narrative or reconciliation heavy.

- Using Power Automate or n8n to extract trial balance snapshots and variance reports.

- Applying Copilot in Excel or Power BI to draft variance explanations.

- Keeping a clear rule that AI drafts are starting points only.
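To make the variance step concrete, here is a minimal sketch of how a flow might decide which lines need a drafted explanation at all. The `LineItem` fields, the function name and both thresholds are illustrative assumptions, not values taken from any reporting standard or client setup.

```python
from dataclasses import dataclass


@dataclass
class LineItem:
    account: str
    budget: float
    actual: float


def flag_variances(items, pct_threshold=0.10, abs_threshold=1000.0):
    """Return (account, variance, pct) for lines that need a written explanation.

    Thresholds are hypothetical defaults; a finance team would set its own.
    """
    flagged = []
    for item in items:
        variance = item.actual - item.budget
        # No percentage can be computed when nothing was budgeted.
        pct = variance / item.budget if item.budget else None
        big_in_money = abs(variance) >= abs_threshold
        big_in_pct = pct is None or abs(pct) >= pct_threshold
        if big_in_money and big_in_pct:
            flagged.append((item.account, variance, pct))
    return flagged


sample = [
    LineItem("Travel", 10000.0, 12500.0),
    LineItem("Software", 50000.0, 50400.0),
    LineItem("Freight", 0.0, 3000.0),
]
for account, variance, pct in flag_variances(sample):
    print(account, variance, pct)
```

Only the flagged lines would be passed on for an AI-drafted explanation, and the finance manager still owns the final wording and the numbers.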

Customer support: Manager-led triage and quality control

Support leaders often leap straight to AI chatbots. A safer, manager-first approach starts with AI in the back office.

- New tickets trigger a flow; AI summarises the ticket and proposes a first‑draft reply.

- Tickets with risk markers (VIP, legal keywords) are auto-routed to specialist queues for human‑only handling.

- Managers review AI‑suggested replies side by side with human originals to adjust thresholds.
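The routing rule in this pattern can be sketched in a few lines. This is an illustration only: the keyword list, tier labels and queue names are hypothetical, and in practice the markers would be agreed with legal and support leads and implemented inside whichever flow tool you use.

```python
import re
from dataclasses import dataclass

# Hypothetical risk markers, for illustration only.
LEGAL_KEYWORDS = ("solicitor", "lawsuit", "legal action", "ombudsman", "data breach")


@dataclass
class Ticket:
    ticket_id: str
    customer_tier: str  # assumed labels, e.g. "standard" or "vip"
    body: str


def route_ticket(ticket: Ticket) -> str:
    """Return the queue for a ticket: human-only specialists or AI draft plus review."""
    if ticket.customer_tier.lower() == "vip":
        return "specialist_human_only"
    text = ticket.body.lower()
    for keyword in LEGAL_KEYWORDS:
        # Word boundaries avoid matching a keyword inside a longer word.
        if re.search(r"\b" + re.escape(keyword) + r"\b", text):
            return "specialist_human_only"
    return "ai_draft_review"


print(route_ticket(Ticket("T-1", "standard", "Please update my delivery address")))
```

Keeping the rule this explicit is deliberate: a manager can read it, challenge it and adjust the markers without needing to understand the model behind the draft replies.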

Sales: Smarter preparation, not autopilot selling

Sales leaders are often nervous about AI “writing” outreach. A manager-led pattern keeps humans in control.

- An n8n flow compiles context from email threads and CRM notes into a consolidated brief.

- Copilot drafts call preparation notes and rough agendas.

- After meetings, Copilot helps generate concise summaries and action lists.

Measuring success through outcomes, not usage

If you want managers to take AI seriously, you need to measure what they care about. Useful metrics at manager and team level include:

- Cycle time: How long does it take to complete a standard process, such as onboarding or closing books?

- Rework and error rates: How often do items bounce back due to missing information or poor quality?

- Manual touchpoints: How many times must a human intervene with copy‑paste work?

- Experience: Are CSAT, NPS or internal engagement scores improving in AI-assisted workflows?

You can still monitor usage for basic hygiene, but it should sit behind outcome metrics. Instead of “why is your team not using Copilot more?” ask “how could AI help you take two days out of your month‑end cycle?”
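As a rough sketch of what outcome tracking can look like, the snippet below compares median cycle time and rework rate for a baseline period and a pilot period. The `WorkItem` structure and the sample dates are invented for illustration; real figures would come from your ticketing, finance or workflow system.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class WorkItem:
    started: datetime
    finished: datetime
    reworked: bool  # True if the item bounced back at least once


def cycle_time_days(items):
    """Median elapsed days from start to finish."""
    return median((i.finished - i.started).total_seconds() / 86400 for i in items)


def rework_rate(items):
    """Share of items that needed rework, between 0 and 1."""
    return sum(i.reworked for i in items) / len(items)


# Invented sample data: three items before the pilot, three during it.
baseline = [
    WorkItem(datetime(2024, 3, 1), datetime(2024, 3, 5), True),
    WorkItem(datetime(2024, 3, 1), datetime(2024, 3, 7), False),
    WorkItem(datetime(2024, 3, 1), datetime(2024, 3, 9), True),
]
pilot = [
    WorkItem(datetime(2024, 4, 1), datetime(2024, 4, 4), False),
    WorkItem(datetime(2024, 4, 1), datetime(2024, 4, 5), True),
    WorkItem(datetime(2024, 4, 1), datetime(2024, 4, 6), False),
]

print("Cycle time (days):", cycle_time_days(baseline), "->", cycle_time_days(pilot))
print("Rework rate:", rework_rate(baseline), "->", rework_rate(pilot))
```

The median is used here so that one unusually slow item does not dominate the comparison; with small teams and short pilots, treat any difference as a prompt for discussion rather than proof.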

Building simple guardrails managers can actually use

Manager-led AI adoption works only when managers feel safe. A practical pattern is to build an “AI charter” for each team, co‑designed by IT and managers.

- Data rules in plain language:

  - Green: Public content, internal templates.

  - Amber: Customer data, financials (approved systems only).

  - Red: Special category personal data, health records.

- Use cases: Explicitly state what is encouraged versus prohibited.

- Review thresholds: When must AI output be second‑checked?

- Incident handling: Simple steps for accidental data exposure.
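If a team wants the data rules in a form that a workflow can check automatically, a charter like the one above could be expressed as a small lookup. This is a sketch under stated assumptions: the category keys and the tool identifier are hypothetical, and it assumes green data may go into any tool, amber only into approved systems, and red into none.

```python
from enum import Enum


class DataClass(Enum):
    GREEN = "green"
    AMBER = "amber"
    RED = "red"


# Category names are illustrative; each team would list its own.
CHARTER = {
    "public_content": DataClass.GREEN,
    "internal_template": DataClass.GREEN,
    "customer_data": DataClass.AMBER,
    "financials": DataClass.AMBER,
    "special_category_personal_data": DataClass.RED,
    "health_records": DataClass.RED,
}

# Hypothetical identifier for an approved system.
APPROVED_TOOLS = {"m365_copilot"}


def may_use(category: str, tool: str) -> bool:
    """Return True if the charter allows this data category in this tool."""
    # Unknown categories are treated as red, so the check fails closed.
    level = CHARTER.get(category, DataClass.RED)
    if level is DataClass.GREEN:
        return True
    if level is DataClass.AMBER:
        return tool in APPROVED_TOOLS
    return False


print(may_use("customer_data", "m365_copilot"))
```

Treating anything unlisted as red keeps the default cautious, which matches the spirit of a charter managers can rely on when they are unsure.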

Supporting hesitant and overloaded managers

Treating managers as one homogeneous group makes adoption harder. Instead:

- Enthusiasts: Give them guardrails and let them pair with cautious peers.

- Quietly cautious managers: Offer small, low‑risk experiments and one-to-one clinics.

- Stretched and sceptical managers: Start by reducing existing burden (e.g., cleaning up documentation) to buy back their time.

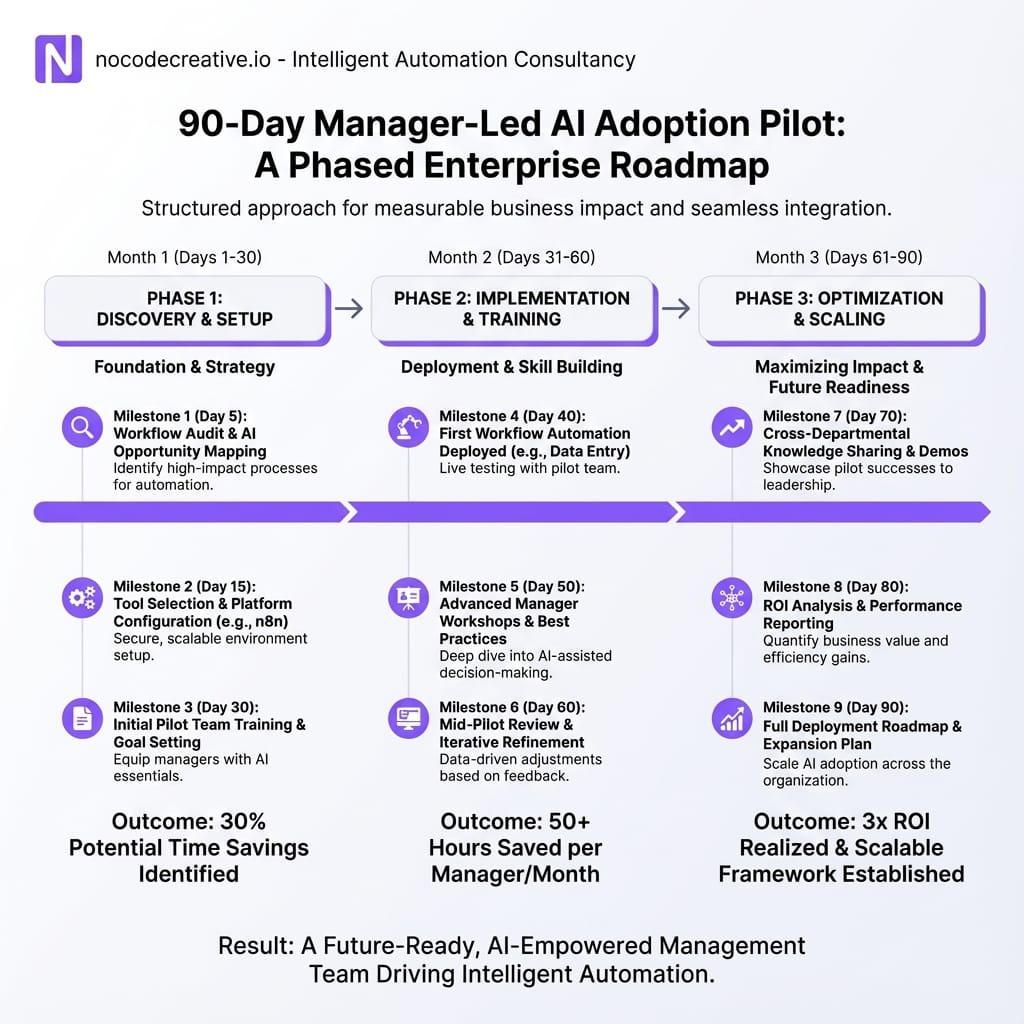

A 90-day roadmap for a manager-led AI pilot

To make this real, here is a simple way to structure a first phase in one function or business unit.

Days 1 to 30

Choose the arena and set the rules

Identify 10 to 20 willing managers. Run a high-quality session on AI basics. Co-create initial AI charters and select two or three target processes per manager.

Days 31 to 60

Build and test manager-owned workflows

Use Copilot, Power Platform and n8n to prototype. Provide "solution accelerators" (prompt libraries, templates). Maintain tight feedback loops.

Days 61 to 90

Prove value and codify the playbook

Run workflows in parallel with real work. Refine prompts. Host "show and tell" sessions. Codify successful patterns into a playbook.

Treat manager AI capability as infrastructure, not training

For most organisations, especially SMEs and mid‑market firms, AI will not fail because of models, licences or missing features. It will fail quietly because managers never get the time, tools or support to redesign work with AI as a serious ingredient.

A manager-first approach reframes AI from “something IT is rolling out” to “a new dimension of managerial capability” that sits alongside coaching, prioritisation and resource planning. It recognises that the real leverage point is the day‑to‑day decisions managers make about how work flows through their teams.

If you start there, with real workflows, pragmatic guardrails and outcome‑based metrics, AI stops being a buzzword in a board pack and starts becoming part of how your organisation actually runs.

Ready to start your manager-led AI programme?

If you want help designing or delivering a manager-led AI adoption programme, our expert AI and automation consultants are available for focused conversations on your specific context and stack.

References

- Want AI To Succeed? Start With Managers Succeeding At AI First (Forbes)

- Manager Support Drives Employee AI Adoption (Gallup Workplace)

- AI at Work Is Here, Now Comes the Hard Part – 2024 Work Trend Index (Microsoft WorkLab)

- 2024 Annual Work Trend Index from Microsoft and LinkedIn (Overview)

- Work reimagined: How to prepare for ‘renaissance and recommitment’ (EY)
